A data-driven analysis with 20+ statistics from Verizon, IBM, Cisco, KPMG, LayerX, Microsoft, the World Economic Forum, and others.

Introduction: The Threat That Lives Inside Your Own Team

When security teams think about email threats, they picture attackers on the outside trying to get in: phishing emails, malware attachments, compromised links. But a new category of email security risk is emerging from inside the organization, and it has nothing to do with malicious intent.

It is called shadow AI: the unsanctioned use of generative AI tools by employees without IT approval, governance, or oversight. And it is happening at a scale that most security leaders do not fully appreciate.

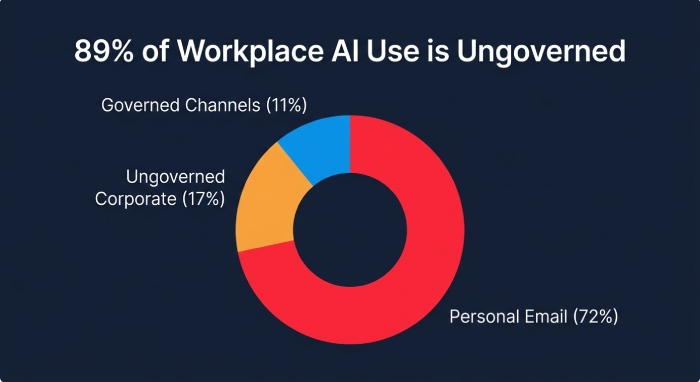

According to the Verizon 2025 Data Breach Investigations Report (DBIR), 15 percent of employees are routinely accessing generative AI systems on their corporate devices at least once every 15 days. Of those employees, 72 percent are using personal, non-corporate email accounts to authenticate, and another 17 percent use corporate emails without integrated authentication systems like SSO. That means only 11 percent of employees using GenAI at work are doing so through properly governed corporate channels.
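The arithmetic behind that 11 percent figure can be checked with a short sketch. The percentages are the DBIR figures quoted above; the function names and the extrapolation to a share of the whole workforce are illustrative assumptions, not something the report itself computes.

```python
# Shares quoted above from the Verizon 2025 DBIR, as percentages.
GENAI_USERS = 15        # employees routinely using GenAI on corporate devices
PERSONAL_ACCOUNT = 72   # of those users, signing in with personal email
CORPORATE_NO_SSO = 17   # of those users, corporate email without SSO


def governed_share(personal: int, no_sso: int) -> int:
    """Percent of GenAI users left after removing both ungoverned groups."""
    return 100 - personal - no_sso


def workforce_share(users: int, subgroup: int) -> float:
    """Subgroup as a percent of all employees (illustrative extrapolation)."""
    return users * subgroup / 100


print(governed_share(PERSONAL_ACCOUNT, CORPORATE_NO_SSO))  # 11
print(workforce_share(GENAI_USERS, PERSONAL_ACCOUNT))      # 10.8
```

Read this way, roughly one employee in nine across the whole workforce would be reaching GenAI tools through a personal account, assuming the two DBIR percentages can be multiplied through like this.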

The IBM Cost of a Data Breach Report 2025 puts a dollar figure on the problem: shadow AI was a contributing factor in 20 percent of breaches, adding an average of $670,000 to breach costs. And 63 percent of organizations have no AI governance policies at all.

This article explains what shadow AI is, how it creates email security vulnerabilities, what the data shows about the scale of the problem, and what organizations can do to bring it under control without killing productivity.

1. The Scale of the Shadow AI Problem

Employees Are Using AI Everywhere, With Almost No Oversight

The KPMG Shadow AI Report (2025) found that up to 58 percent of employees are using AI productivity tools on a daily basis. Many executives would be shocked to know how pervasive the problem is within their own organizations. Employees resort to shadow AI because it is faster, easier, and less restrictive than their company’s official tools. The competitive pressure to be productive, combined with the consumer-grade accessibility of tools like ChatGPT, Claude, and Gemini, means that employees are making individual risk decisions that IT teams cannot see.

The MIT State of AI in Business 2025 Report found a striking disconnect: while only 40 percent of companies say they have purchased an official LLM subscription, workers from over 90 percent of the companies surveyed reported regular use of AI tools. The professionals expressing the most skepticism about enterprise AI tools were often the heaviest users of consumer AI interfaces in their personal workflows. Shadow AI is not a fringe behavior. It is the dominant way most knowledge workers interact with AI today.

The Cisco 2025 Cybersecurity Readiness Index reinforces the visibility crisis: 60 percent of IT teams say they cannot see specific prompts or requests made by employees using GenAI tools. And 60 percent lack confidence in their ability to identify the use of unapproved AI tools in their environments. Twenty-two percent of companies allow completely unrestricted access to public GenAI tools.

Only 11% of employees using GenAI at work access it through properly governed corporate channels. 72% use personal email accounts, and 17% use corporate email without SSO. — Verizon 2025 DBIR

GenAI Is Now the Number One Data Exfiltration Vector

The LayerX Enterprise AI and SaaS Data Security Report 2025 provides some of the most granular data available on how shadow AI drives data leakage. According to their research, GenAI now accounts for 32 percent of all corporate-to-personal data exfiltration, making it the number one vector for corporate data movement outside enterprise control. On average, employees make 14 pastes per day using non-corporate accounts, of which at least three contain sensitive data. Forty percent of uploads into GenAI tools include PII or PCI data. And 67 percent of AI usage occurs through unmanaged personal accounts.

The report found that 87 percent of instant messaging activity happens through unmanaged, non-corporate accounts, and chat platforms are the top hotspot for sensitive data exposure, with 62 percent of data leaks occurring through them. When employees copy email content, customer data, or contract language into an AI chatbot accessed through a personal account, that data leaves the organization’s control entirely.

2. How Shadow AI Creates Email Security Vulnerabilities

Shadow AI intersects with email security in three specific, measurable ways:

Vulnerability 1: Sensitive Email Content Pasted Into AI Tools

The most direct risk is employees copying sensitive email content, including customer data, financial information, contract terms, credentials, and internal communications, into AI chatbots to draft responses, summarize threads, or analyze information. Because 72 percent of employees using GenAI do so through personal email accounts (Verizon DBIR 2025), this data flows through channels that are completely invisible to corporate DLP, email archiving, and compliance monitoring systems.

The KPMG report specifically warns that unauthorized AI tool usage can expose client-confidential data, intellectual property, financial records, personal identifiers, and legal documents. When employees paste email content into an AI tool, they are effectively exfiltrating data through a channel that most email security stacks are not designed to monitor.

Vulnerability 2: AI-Generated Responses That Introduce Risk

When employees use AI to draft email responses, they introduce a second category of risk: AI-generated content may contain inaccurate information, hallucinated data, or confidential material carried over from whatever else was pasted into the model’s context window. If an employee pastes one client’s confidential contract into an AI tool to generate a summary and then emails that summary to a different client, the resulting data breach may slip past every inbound and outbound email filter, because the content looks like a legitimate business communication written by the employee.

Vulnerability 3: Shadow AI as a Phishing Amplifier

The Microsoft Digital Defense Report 2025 found that AI-generated phishing emails achieve a 54 percent click-through rate, 4.5 times higher than manually crafted phishing. But the same capability is available to any employee who uses GenAI to craft persuasive internal communications. An employee using shadow AI to write increasingly polished, personalized emails can inadvertently train colleagues to trust AI-generated email content, making the organization more susceptible to externally generated AI phishing when it arrives. A legitimate AI-assisted email from a colleague and a malicious AI-generated phishing email from an attacker become very hard to tell apart.

3. The Governance Vacuum: Why Most Organizations Are Flying Blind

The IBM Cost of a Data Breach Report 2025 found that among the 600 organizations studied, 63 percent have no AI governance policies in place to manage AI or prevent shadow AI. Even among organizations that do have governance policies, fewer than half have an approval process for AI deployments, and 61 percent lack AI governance technologies. Only 34 percent of organizations with governance policies perform regular audits for unsanctioned AI.

The result is a measurable cost impact. Among organizations that experienced AI-related security incidents, 97 percent lacked proper AI access controls. Shadow AI added $670,000 to average breach costs. And 13 percent of organizations reported breaches specifically involving an AI model or application, with 31 percent of those leading to operational disruption and unauthorized access to sensitive data.

The WEF Global Cybersecurity Outlook 2026 found that 54 percent of respondents cite limited knowledge and skills as the key obstacle to adopting AI-driven cybersecurity solutions. The Accenture State of Cybersecurity Resilience 2025 found that 77 percent of organizations lack the ability to defend against AI-enabled threats, and 83 percent have not established a secure cloud foundation with integrated monitoring for AI workloads.

63% of organizations have no AI governance policies. 97% of organizations with AI-related breaches lacked proper AI access controls. Shadow AI adds $670,000 to average breach costs. — IBM Cost of a Data Breach Report 2025

4. What Email Security Has to Do With It: The Outbound Problem

Most email security investments focus on inbound threats: stopping phishing, malware, and spam before they reach employees. But shadow AI creates an outbound problem that most email security architectures are not designed to address.

When an employee copies sensitive email content, pastes it into an AI tool through a personal browser tab, generates a response, and pastes it back into their corporate email client, no inbound email filter will flag this activity. The data leakage happens through the browser and the AI platform, not through email. But the consequences cascade back into email: the employee’s outbound emails may now contain information that should never have left the organization.

This is where outbound email security and SMTP gateway controls become critical. Organizations that route outbound email through a managed SMTP service gain visibility into what is being sent, to whom, and whether outgoing messages contain sensitive data patterns that should not be leaving the organization. Outbound DLP scanning at the SMTP layer cannot tell whether a message was composed with AI assistance, but it can serve as a safety net to catch sensitive information being exfiltrated through AI-assisted email workflows, even when the AI interaction itself happens outside the email system.
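As a rough illustration of what outbound scanning at the SMTP layer looks for, the sketch below checks a message body for a few sensitive-data patterns. It uses only the Python standard library; the three patterns, the category names, and the `scan_outbound` function are assumptions chosen for illustration, and a production DLP policy would be far broader and tuned to the organization's own data.

```python
import re
from email.message import EmailMessage

# Illustrative patterns only, not a complete DLP rule set.
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
SECRET_RE = re.compile(r"(?i)\b(?:api[_-]?key|password|secret)\s*[:=]\s*\S+")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, used to cut false positives on long digit runs."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:      # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def scan_outbound(msg: EmailMessage) -> list[str]:
    """Return the sensitive-data categories found in the plain-text body."""
    body = msg.get_body(preferencelist=("plain",))
    text = body.get_content() if body else ""
    findings = []
    for match in CARD_RE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            findings.append("payment_card")
            break
    if SSN_RE.search(text):
        findings.append("us_ssn_like")
    if SECRET_RE.search(text):
        findings.append("credential")
    return findings


msg = EmailMessage()
msg.set_content("Card 4111 1111 1111 1111 and password: hunter2")
print(scan_outbound(msg))  # ['payment_card', 'credential']
```

A gateway would typically hold, quarantine, or route for review any message with a non-empty result rather than silently dropping it; which of those applies is a policy decision outside this sketch.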

The LayerX report specifically recommends that enterprises implement paste and prompt controls as first-class policies, monitoring both file-based and file-less data flows, because sensitive data increasingly moves through copy-paste and text inputs rather than traditional file uploads.
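One way to picture a paste control as a first-class policy is as a small decision function evaluated on every paste event. The sketch below is hypothetical throughout: the `PasteEvent` fields, the allowlisted hostname, and the allow/warn/block outcomes are assumptions for illustration and do not describe any vendor's product or the LayerX report's own rules.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    WARN = "warn"
    BLOCK = "block"


@dataclass(frozen=True)
class PasteEvent:
    destination: str          # hostname of the tool receiving the paste
    corporate_account: bool   # True if signed in with a managed account
    has_sensitive_data: bool  # verdict from an upstream content classifier


# Hypothetical allowlist of sanctioned tools; replace with your own.
APPROVED_DESTINATIONS = {"ai.example-corp.com"}


def decide(event: PasteEvent) -> Action:
    """Block sensitive pastes to ungoverned tools, warn on other ungoverned use."""
    governed = (
        event.destination in APPROVED_DESTINATIONS and event.corporate_account
    )
    if not governed and event.has_sensitive_data:
        return Action.BLOCK
    if not governed:
        return Action.WARN
    return Action.ALLOW


print(decide(PasteEvent("chat.example.com", False, True)))  # Action.BLOCK
```

The design choice here is that the account type matters as much as the destination: an approved tool reached through a personal account is still treated as ungoverned.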

5. Practical Steps: Controlling Shadow AI Without Killing Productivity

The goal is not to ban AI. Employees are using these tools because they work. The goal is to bring AI usage into governed channels where it can be monitored, controlled, and protected. Here is a six-step approach:

- 1. Establish an AI acceptable use policy. Define which AI tools are approved, what types of data may and may not be entered into AI platforms, and how AI-generated content should be reviewed before being shared externally. The IBM data shows that the mere existence of a governance policy correlates with significantly lower breach costs. It does not need to be perfect on day one, but it needs to exist.

- 2. Provide approved AI tools so employees do not seek unauthorized alternatives. The MIT research found that shadow AI usage is highest when official enterprise AI tools are slow, restrictive, or nonexistent. If you give employees a governed, capable AI tool, they are far less likely to use personal accounts on consumer platforms. The friction of the alternative is the best enforcement mechanism.

- 3. Implement outbound email scanning and DLP at the SMTP gateway. Since shadow AI interactions often result in sensitive data being included in outbound emails, SMTP-level scanning can catch patterns like customer PII, financial data, or credentials appearing in outgoing messages. This does not prevent the AI interaction itself, but it catches the downstream consequences.

- 4. Enforce email authentication (DMARC, SPF, DKIM) to protect against AI-generated impersonation. As employees grow accustomed to receiving polished, AI-assisted emails from colleagues, polish alone stops being a useful signal of legitimacy. A DMARC policy of quarantine or reject on your domain makes it much harder for attackers to exploit this normalization by spoofing your exact domain to send AI-generated phishing to your contacts, although it does not stop lookalike domains.

- 5. Audit GenAI usage across corporate endpoints. The Cisco data shows that 60 percent of IT teams are blind to employee AI usage. Use endpoint monitoring to identify which AI services employees are accessing, and through which authentication methods. Flag any usage through personal email accounts as a policy violation requiring remediation.

- 6. Train employees on the specific risks of AI data leakage, not just phishing. Most security awareness training focuses on recognizing inbound threats. Employees also need to understand that pasting client data, financial records, or internal communications into an AI tool is a data exfiltration event, regardless of intent. The KPMG report emphasizes that employees often do not realize the security implications of using consumer AI tools for work tasks.
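For step 4, a quick way to tell whether a domain's DMARC policy is actually enforcing is to inspect the record's tags. The sketch below parses a DMARC TXT record string (normally published at `_dmarc.<your-domain>`) and reports whether the policy is `quarantine` or `reject` for all mail. It performs no DNS lookup, and the function names and the choice to require `pct=100` are assumptions for illustration.

```python
def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC TXT record into its tag/value pairs."""
    tags = {}
    for part in record.split(";"):
        if "=" in part:
            key, _, value = part.partition("=")
            tags[key.strip().lower()] = value.strip()
    return tags


def is_enforcing(record: str) -> bool:
    """True if the policy is quarantine or reject and applies to all mail.

    The pct tag defaults to 100 when it is absent from the record.
    """
    tags = parse_dmarc(record)
    if tags.get("v") != "DMARC1":
        return False
    policy = tags.get("p", "none").lower()
    pct = tags.get("pct", "100")
    return policy in {"quarantine", "reject"} and pct == "100"


print(is_enforcing("v=DMARC1; p=reject; rua=mailto:dmarc@example.com"))  # True
print(is_enforcing("v=DMARC1; p=none"))                                  # False
```

A record with `p=none` only requests reports, so a domain in that state is monitored but not yet protected against direct spoofing.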

Conclusion: Your Inbox Is a Two-Way Street

The email security conversation has historically been about defense: keeping bad things out. Shadow AI forces a fundamental expansion of that conversation to include what is going out.

The data is stark. Fifteen percent of employees routinely access GenAI tools on corporate devices, and the large majority of them do so through accounts outside corporate governance. GenAI is now the number one vector for corporate data exfiltration. Sixty-three percent of organizations have no AI governance policies. And shadow AI adds $670,000 to average breach costs when incidents occur.

But the data also shows that governance works. Organizations with AI policies in place experience measurably lower breach costs. Organizations that provide approved AI tools see less shadow usage. And outbound email controls at the SMTP gateway can catch sensitive data leaking through AI-assisted workflows before it reaches external recipients.

Email security in 2026 is no longer just about what arrives in your inbox. It is equally about what leaves it. The organizations that recognize this shift and extend their security architecture to cover outbound data flows, AI governance, and employee behavior will be the ones that harness AI’s productivity benefits without becoming its next security statistic.

References & Sources

All statistics cited in this article are from institutional reports. Full URLs are provided where available.

[1] Verizon, 2025 Data Breach Investigations Report (DBIR) [PDF]

https://www.verizon.com/business/resources/reports/dbir/

[2] IBM / Ponemon Institute, Cost of a Data Breach Report 2025 [PDF]

https://www.bakerdonelson.com/webfiles/Publications/20250822_Cost-of-a-Data-Breach-Report-2025.pdf

[3] Cisco, 2025 Cybersecurity Readiness Index [PDF]

[4] KPMG, Shadow AI Is Already Here: Take Control, Reduce Risk, and Unleash Innovation (2025) [PDF]

[5] LayerX, Enterprise AI and SaaS Data Security Report 2025 [PDF]

https://go.layerxsecurity.com/hubfs/LayerX_Enterprise_AI_and_SaaS_Data_Security_Report.pdf

[6] Microsoft, Digital Defense Report 2025 [PDF]

[7] World Economic Forum, Global Cybersecurity Outlook 2026 [PDF]

https://reports.weforum.org/docs/WEF_Global_Cybersecurity_Outlook_2026.pdf

[8] Accenture, State of Cybersecurity Resilience 2025 [PDF]

[9] MIT / MLQ, State of AI in Business 2025 Report [PDF]

https://mlq.ai/media/quarterly_decks/v0.1_State_of_AI_in_Business_2025_Report.pdf

[10] NSA / DoD, Joint Cybersecurity Information: AI Data Security (May 2025) [PDF]

https://media.defense.gov/2025/May/22/2003720601/-1/-1/0/CSI_AI_DATA_SECURITY.PDF

[11] KnowBe4, Phishing Threat Trends Report Vol. 5 (March 2025) [PDF]

https://www.knowbe4.com/hubfs/Phishing-Threat-Trends-2025_Report.pdf

[12] FBI Internet Crime Complaint Center (IC3), 2024 Annual Report [PDF]